Study Notes

Apache Kafka Study Note

Apache Kafka is an open-source distributed event store and stream-processing platform. Since 2012, it has steadily gained popularity thanks to its excellent performance and flexible configuration. Today, more than 80% of all Fortune 100 companies are using Kafka.

AWS DynamoDB Study Note

DynamoDB was announced by Amazon CTO Werner Vogels in 2012, 14 years after the term NoSQL was proposed in 1998. It supports both key-value and document-oriented data storage.

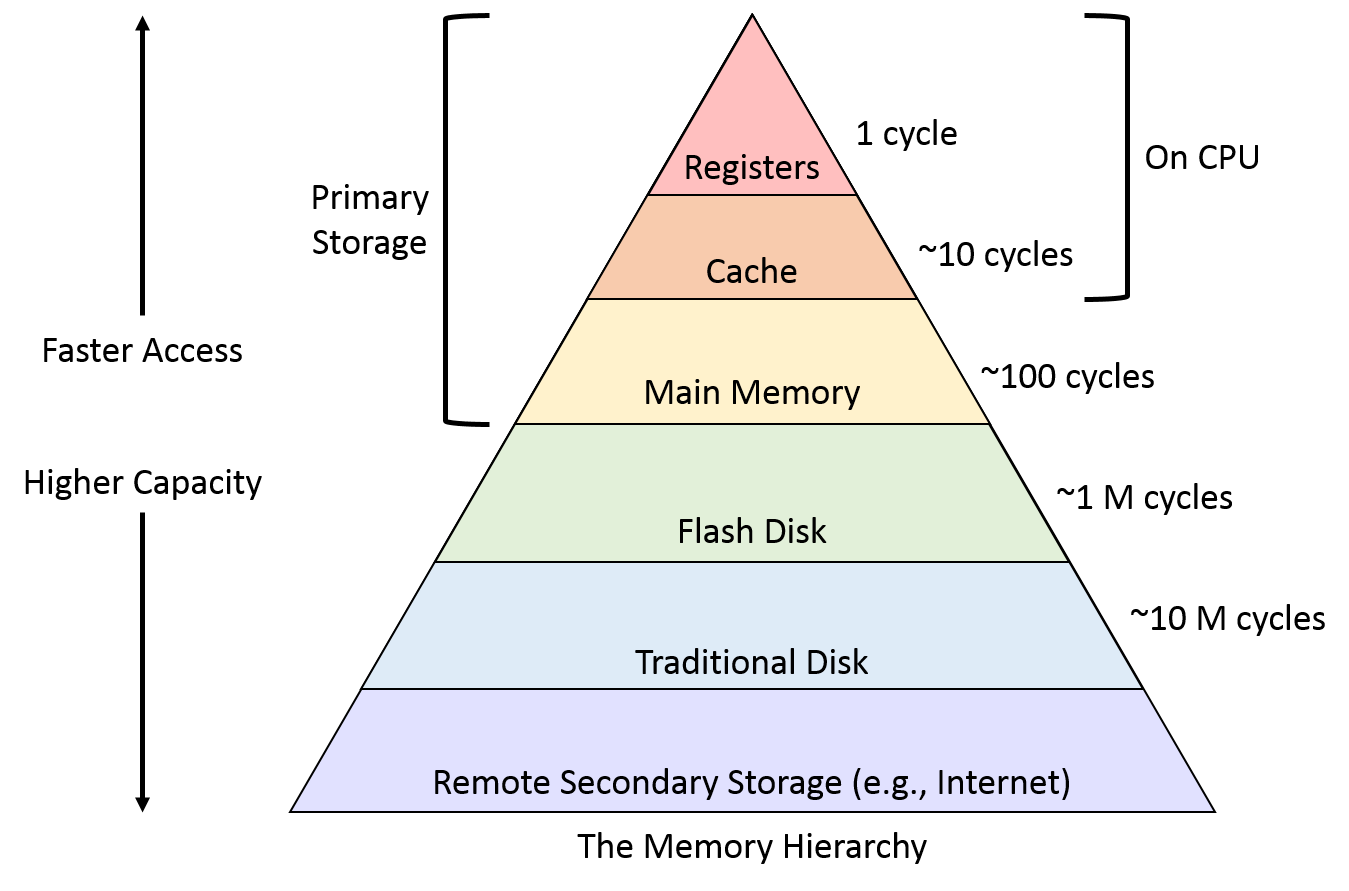

OS Memory Management

In computer systems, the CPU is much faster than the storage system, so ideally we want storage reads and writes to be as fast as possible. Unfortunately, the price of storage media increases exponentially with access speed. To balance cost and performance, systems are therefore designed with a multi-layer memory hierarchy.

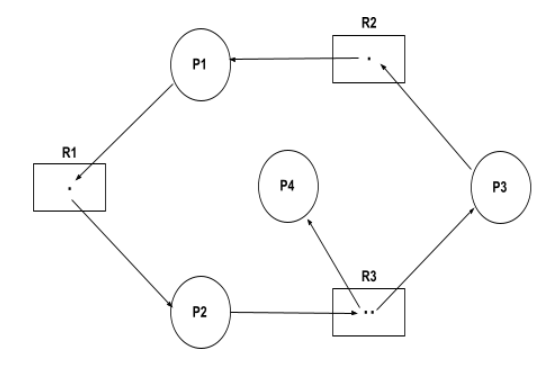

CPU Scheduling and Deadlock

CPU scheduling is the process of determining which process gets the CPU for execution while the other processes wait. Its main task is to make sure that whenever the CPU becomes idle, the OS selects one of the processes in the ready queue for execution.
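
As a rough illustration of picking the next process from the ready queue, here is a minimal sketch that assumes a first-come, first-served (FCFS) policy, with all processes arriving at time 0. The policy choice, the function name `run_fcfs`, and the `(name, burst_time)` tuple shape are assumptions made for this example, not something the note prescribes.

```python
from collections import deque


def run_fcfs(processes):
    """Simulate FCFS scheduling.

    `processes` is a list of (name, burst_time) tuples in arrival order.
    Assumption for this sketch: every process arrives at time 0.
    Returns a list of (name, start_time, end_time) tuples.
    """
    ready_queue = deque(processes)
    clock = 0
    timeline = []
    # Whenever the CPU is free, take the process at the head of the ready queue.
    while ready_queue:
        name, burst = ready_queue.popleft()
        timeline.append((name, clock, clock + burst))
        clock += burst  # the CPU is busy until this process finishes
    return timeline
```

For example, `run_fcfs([("A", 3), ("B", 2)])` runs A from time 0 to 3 and then B from time 3 to 5. Other policies would only change how the next process is chosen from the queue.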

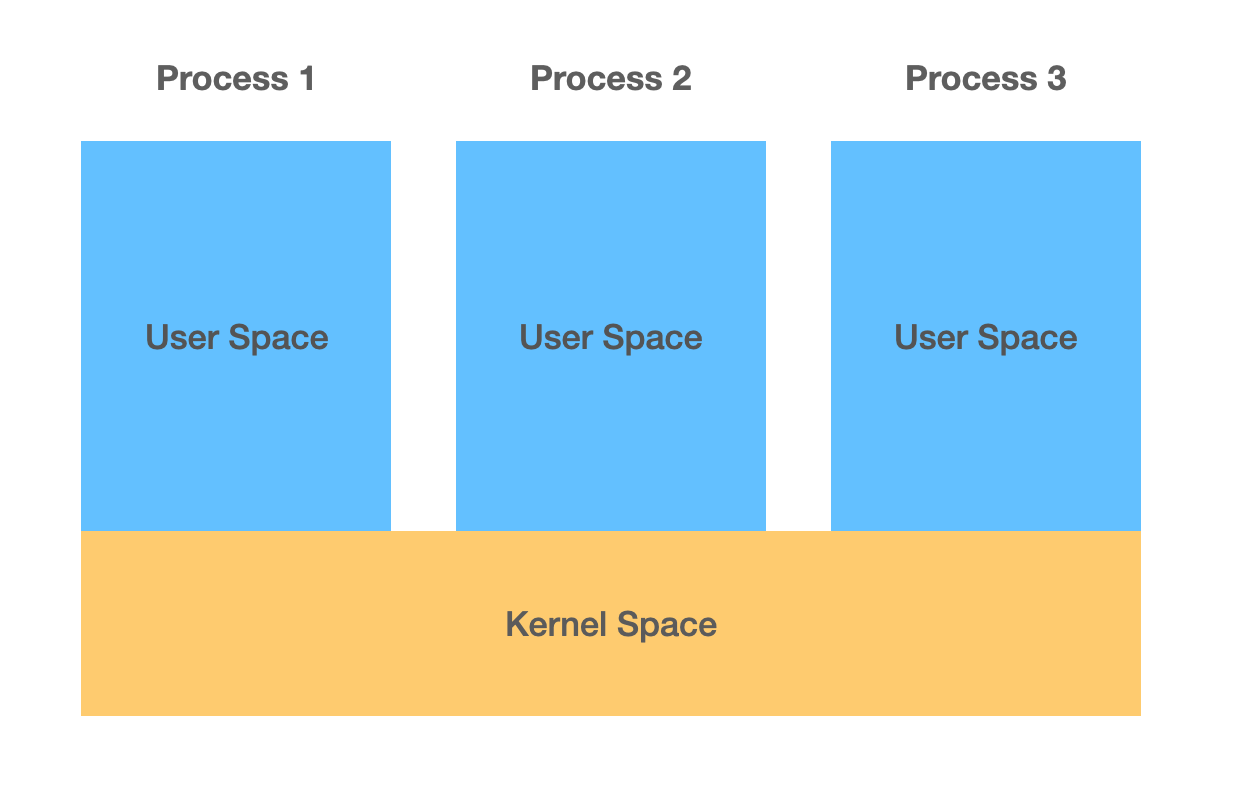

Inter-Process Communication (IPC)

To improve CPU utilization and manage jobs more effectively in a multiprogramming system, the operating system (OS) introduces the abstraction of a “process”. Each process has a Process Control Block (PCB), which contains all the information the OS requires to manage the process, and its own resources in user-space memory. Processes are generally independent of each other, but the kernel space is shared, so communication between processes must go through the kernel.
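
To make "communication goes through the kernel" concrete, here is a minimal sketch using an anonymous pipe, one of several IPC mechanisms. It assumes a POSIX system (`os.fork` is not available on Windows), and the helper name `send_via_pipe` is invented for this example. The child writes into a kernel-managed pipe buffer and the parent reads from it; the two processes never touch each other's memory directly.

```python
import os


def send_via_pipe(message: bytes) -> bytes:
    """Fork a child that sends `message` to the parent through a pipe.

    Assumption for this sketch: POSIX only, since it relies on os.fork().
    """
    read_fd, write_fd = os.pipe()  # the kernel creates the pipe and its buffer
    pid = os.fork()
    if pid == 0:
        # Child process: only writes, so close the unused read end.
        os.close(read_fd)
        os.write(write_fd, message)
        os.close(write_fd)
        os._exit(0)  # exit immediately without running parent cleanup code

    # Parent process: only reads, so close the unused write end.
    os.close(write_fd)
    chunks = []
    while True:
        chunk = os.read(read_fd, 4096)
        if not chunk:  # empty read means every write end has been closed
            break
        chunks.append(chunk)
    os.close(read_fd)
    os.waitpid(pid, 0)  # reap the child so it does not linger as a zombie
    return b"".join(chunks)
```

Calling `send_via_pipe(b"hello")` returns the bytes the child wrote. Every `os.write` and `os.read` here is a system call, which is the sense in which the data passes through the kernel.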
